This week I’ve been inspired by some licence writing I’m having to do, where I need to justify my experimental approaches. One of my experimental approaches is brain imaging and my justification is that it allows you to see inside the brain without the individual having to be dead. Quite handy from an experimental perspective. So today we’re going to explore different types of brain imaging and how they’re applied. It’s a bit long but stick around, I’ll try and make it interesting. And I shall dedicate this post to several friends of mine who work on brain imaging who will doubtless hate everything about this post and probably call me to tell me how much of it I’ve gotten wrong.

One of the first imaging techniques used to see inside living people was the x-ray. Discovered by accident in 1895, x-rays are absorbed by calcium and so bones show up as shadows on the detector. The rest of the body is basically sacs of fluids, air, fats and proteins at various different densities, so they show up with different degrees of opacity on an x-ray image. Those of you paying attention will already realise this isn’t going to be super useful in imaging the brain because it’s essentially hidden in a big block of calcium. And this big block of calcium is filled with a bunch of fluid – the CSF – that, to an x-ray, looks a lot like brain tissue.

So, one of the first ways of imaging the brain was called pneumoencephalography. This drained the CSF and replaced it with air. Yes, actual air. They blew up your skull like a balloon. Surprisingly this was variously quoted as being ‘quite painful’ and ‘not well tolerated in conscious patients’ and also ‘came with significant risks to the individual, including haemorrhage’. Not good. But it did give a more detailed view of the surface of the brain and was good at spotting structural changes produced by things like tumours.

Before their discovery in 1895, x-rays were just an unidentified type of radiation emanating from experimental discharge tubes. They were noticed by scientists investigating the cathode rays such tubes produce, which had first been observed in 1869.

To use x-rays to image the brain without blowing it up like a party favour we need lots of them from lots of different angles. This is what a CT scan does. It uses fancy maths to produce slices of the body derived from x-rays fired at you in a spin, and won its creators the Nobel prize in the late 70’s. In the brain, CT imaging is used for big stuff, things like tumours and strokes. This is because blood in the brain, or a tumour, is a different density from the tissue around it and therefore shows up as a different shade of grey on the scan. But the white and grey matter in your brain are also slightly different densities and can be distinguished by CT scanners. This meant that in the late 80’s Hachinski and colleagues were able to spot what they called leukoaraiosis. These are the patches we now call white matter hyperintensities: on CT they look darker than the surrounding white matter, on later MRI scans they look whiter than normal, and they are an important feature of dementia. However, we weren’t going to be able to figure that out until we developed something fancier than an x-ray.
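For the curious, the "fancy maths" behind CT can be hinted at in a few lines. This is a toy sketch under heavy assumptions, not how a real scanner works: it invents a 5×5 "slice" with one dense spot, records x-ray-style sums from only two angles, and smears those sums back across the grid (unfiltered back-projection). Real CT uses many more angles plus a filtering step, and the names `phantom` and `back_project` are made up for illustration.

```python
import numpy as np

# Hypothetical 5x5 "slice": zeros for soft tissue, one dense spot (say, a bleed).
phantom = np.zeros((5, 5))
phantom[1, 3] = 1.0

def back_project(image):
    """Unfiltered back-projection from two views (0 and 90 degrees)."""
    # Each projection is the total absorption along one set of parallel rays.
    view_down = image.sum(axis=0)    # rays travelling down the columns
    view_across = image.sum(axis=1)  # rays travelling along the rows
    # Smear each projection back along the path its rays took, then add them.
    return view_down[np.newaxis, :] + view_across[:, np.newaxis]

reconstruction = back_project(phantom)
# The brightest pixel sits where the two smears cross.
brightest = tuple(int(i) for i in np.unravel_index(np.argmax(reconstruction), reconstruction.shape))
print(brightest)
```

With only two views the reconstruction is a blurry cross, but its brightest point lands back on the dense spot at row 1, column 3. Adding more angles is what sharpens that cross into a usable image.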

And we were doing that from the 50’s onwards. We sort of developed radiolabelling and magnetic resonance in tandem, but radiolabelling is prettier and easier to explain so I’ll start with that. Radioimaging uses radioactive tracers that can be picked up by specific detectors, in a similar manner to x-rays. The advantage of radiolabelling is that you can attach your tracer to whatever you like, or even just use it completely raw. Some of the first studies using this technique injected xenon-133 directly into arteries in the neck and imaged the brain whilst making the patients think about various things, resulting in a paper gloriously entitled ‘Localization of cortical areas activated by thinking’.

The two main types of radioimaging are PET and SPECT. The former relies on tracers that emit positrons, the latter on tracers that emit gamma rays. SPECT is generally cheaper and easier: the radiotracers used for SPECT imaging have longer half-lives and the gamma cameras are cheaper. PET radiotracers, by contrast, have half-lives measured in minutes rather than hours, and the scanners cost millions. However, the image resolution from a PET scan is slightly better. One common thing labelled with radioactivity is glucose. 18-fluorodeoxyglucose, or 18-FDG, PET scans are usually used to detect tumours, which have a higher metabolic rate than surrounding tissues.
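To put rough numbers on why half-life matters for logistics, here is a minimal sketch of radioactive decay. The half-lives are approximate values supplied here for illustration, not taken from the post: roughly 110 minutes for fluorine-18 (the label in 18-FDG) and roughly six hours for technetium-99m (a common SPECT label). The function name `fraction_remaining` is hypothetical.

```python
def fraction_remaining(elapsed_minutes, half_life_minutes):
    """Fraction of a radiotracer's activity left after some time has passed."""
    # Activity halves once per half-life: N(t) / N0 = 0.5 ** (t / half-life).
    return 0.5 ** (elapsed_minutes / half_life_minutes)

# Approximate half-lives, assumed for illustration only.
FLUORINE_18_MIN = 110.0        # PET label used in 18-FDG
TECHNETIUM_99M_MIN = 6.0 * 60  # common SPECT label, roughly six hours

for name, half_life in [("F-18", FLUORINE_18_MIN), ("Tc-99m", TECHNETIUM_99M_MIN)]:
    left = fraction_remaining(6 * 60, half_life)
    print(f"{name}: {left:.0%} of the activity left after six hours")
```

Under these assumptions, six hours after production about a tenth of the fluorine-18 activity is left, against half of the technetium-99m. That gap is one reason the shorter-lived PET tracers are harder to ship and schedule.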

More recently 18-FDG has been used to detect cognitive decline in dementia, where hypometabolism in the hippocampus is a cardinal sign. To make radioimaging more dementia specific, researchers have developed a radiolabelled thioflavin T analogue, known as Pittsburgh compound-B, which can image amyloid deposits in the brain. The issue with this is that quite a lot of the general population have amyloid in their brains as they age, so they will show Pittsburgh positivity on a scan like this. Flortaucipir has been radiolabelled and used as a tau radiotracer, which can be used to image dementia in a similar way and is more sensitive to dementia overall but less sensitive to mild cognitive impairment.

Now we come to MRI, magnetic resonance imaging. Which is complicated. I spent weeks, and I mean weeks, revising how this works for my PhD viva. I was asked no questions on it and to this day have failed to retain any of the information.

MRI has a wide range of applications in medical diagnosis and more than 25,000 scanners are estimated to be in use worldwide.

MRI is based on the magnetization properties of atomic nuclei. You use magnets to make the protons in the body line up in a particular direction. You fling them out of alignment using a radiofrequency pulse and then you let them get back into alignment, with both the magnetic field and with each other, and you measure how much energy they release whilst doing it. You can then assign a greyscale value to that energy signature and you get a picture. Much maths and physics is involved that you do not need to know about and which I do not understand.

When we started using this as a technique it was fairly rapidly established that different tissues had different relaxation times, so the energy signatures they gave off were different. For example, protons in fat release their energy faster than protons in water. The upshot of this is that different bits of different tissues look different. And they can look different depending on what you fling at them in the first place.
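The fat-versus-water difference can be sketched with the standard exponential recovery curve, in which the fraction of alignment regained after time t is 1 − exp(−t / T1). The T1 values below are rough, illustrative assumptions rather than measurements, and `recovered_fraction` is a hypothetical name.

```python
import math

def recovered_fraction(time_ms, t1_ms):
    """Fraction of the original alignment regained time_ms after the pulse."""
    # Standard exponential recovery: Mz(t) / M0 = 1 - exp(-t / T1).
    return 1.0 - math.exp(-time_ms / t1_ms)

# Illustrative T1 values in milliseconds; assumptions, not measurements.
T1_FAT_MS = 250.0
T1_WATER_MS = 3000.0

for tissue, t1 in [("fat", T1_FAT_MS), ("water", T1_WATER_MS)]:
    print(f"{tissue}: {recovered_fraction(500, t1):.0%} realigned after 500 ms")
```

With these made-up numbers, fat is most of the way back into alignment after half a second while water has barely started, which is the kind of gap a scanner turns into contrast.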

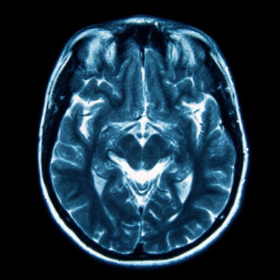

Let me explain. The two main types of images you can get out of an MRI are T1-weighted and T2-weighted. These involve you flinging stuff out of alignment in different ways. What you get back is a rough anatomical image for T1-weighted sequences and a more liquid-heavy image for T2-weighted sequences. The way to spot them if someone sticks them in front of you is that in a T1-weighted image the white matter is whiter than the grey matter. In T2-weighted images it’s the other way around.
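The white-versus-grey flip can be reproduced with a simplified spin-echo signal model, where brightness is proton density × (1 − exp(−TR / T1)) × exp(−TE / T2). Everything numeric below is an assumption for illustration: the tissue values are rough textbook-style figures, the TR and TE timings are just typical-looking short and long choices, and `spin_echo_signal` is a hypothetical name. It is a sketch of the idea, not a scanner protocol.

```python
import math

def spin_echo_signal(proton_density, t1_ms, t2_ms, tr_ms, te_ms):
    """Simplified spin-echo brightness for one tissue (arbitrary units)."""
    # TR (time between pulses) sets how much T1 recovery happens;
    # TE (time before listening) sets how much T2 decay happens.
    t1_recovery = 1.0 - math.exp(-tr_ms / t1_ms)
    t2_decay = math.exp(-te_ms / t2_ms)
    return proton_density * t1_recovery * t2_decay

# Rough, assumed tissue values: (proton density, T1 in ms, T2 in ms).
WHITE_MATTER = (0.7, 600.0, 80.0)
GREY_MATTER = (0.8, 950.0, 100.0)

# Assumed timings: short TR and TE for T1-weighting, long TR and TE for T2-weighting.
for label, tr, te in [("T1-weighted", 500.0, 15.0), ("T2-weighted", 4000.0, 100.0)]:
    white = spin_echo_signal(*WHITE_MATTER, tr, te)
    grey = spin_echo_signal(*GREY_MATTER, tr, te)
    brighter = "white" if white > grey else "grey"
    print(f"{label}: {brighter} matter is brighter")
```

Under these assumptions the short timings favour white matter, which recovers faster, and the long timings favour grey matter, whose signal decays more slowly. Changing only the timings is enough to flip the contrast.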

Now, this is the sentence that will get me hate mail from the radiologists. Beyond this point it really just becomes a matter of fiddling with what you fling at the brain until you get pretty pictures showing what you want. You can suppress signals from fat and water, you can label protons in arteries as they go into the brain to look at perfusion, you can look specifically at oxygen levels to determine which bits of the brain light up in response to things, you can add contrast agents that normally don’t get into the brain to look at blood-brain barrier breakdown. The sky is the limit. Or rather, the magnetic field strength is the limit. At the moment the resolution for a human MRI scan in a living person is around 1 mm. In a dead person it’s around 10 times better but that’s not overly useful in treating disease.

So how is this all used in dementia and where is it going?

For MRI and functional MRI, what matters is deviation from the mean. About 10 years ago there was a great study from the guys at the Mayo in Rochester who took a cohort of normal elderly people and scanned their brains, then they scanned the brains of patients with Alzheimer’s, dementia with Lewy bodies and frontotemporal dementia. The resulting paper showed MRI ‘maps’ of the different patient cohorts. Rather than compare everyone to a control group, some studies compare patients to themselves as they progress from mild cognitive impairment to full dementia, although a recent meta-analysis has suggested this isn’t spectacularly sensitive.

But what about the future?

3D amplified MRI is a super snazzy technique which allows us to look at the movement of the brain and can be used to look at brain issues associated with changes in fluid dynamics. For example, we could use it to examine the changes in CSF flow which are associated with cognitive decline.

Hyperpolarized imaging gives you extremely pretty pictures using labelled molecules, much like PET, except this time the label isn’t radioactive. The advantage of this technique is that the specific molecules involved in metabolism, like pyruvate and lactate, can be imaged. This gives you a picture of the metabolic health of the brain. Given that we know dementia brains are often hypometabolic, an imaging technique that could be applied repeatedly to patients without fear of radiation poisoning can only be a good thing.

And with increasingly open science, big data sets are now available for probing by researchers all over the world. Artificial intelligence is being developed to analyse thousands of images to find patterns or lack thereof. From blowing the fluid out of people’s skulls, to just suppressing the fluid signal on an MRI scan, our capacity to image inside the brain has come a long way.

Dr Yvonne Couch

Author

Dr Yvonne Couch is an Alzheimer’s Research UK Fellow at the University of Oxford. Yvonne studies extracellular vesicles and their role in changing the function of the vasculature after stroke, aiming to discover why the prevalence of dementia after stroke is three times higher than the average. It is her passion for problem solving and love of science that drives her in advancing our knowledge of disease. Yvonne has joined the team of staff bloggers at Dementia Researcher, and will be writing about her work and life as she takes a new road into independent research.
